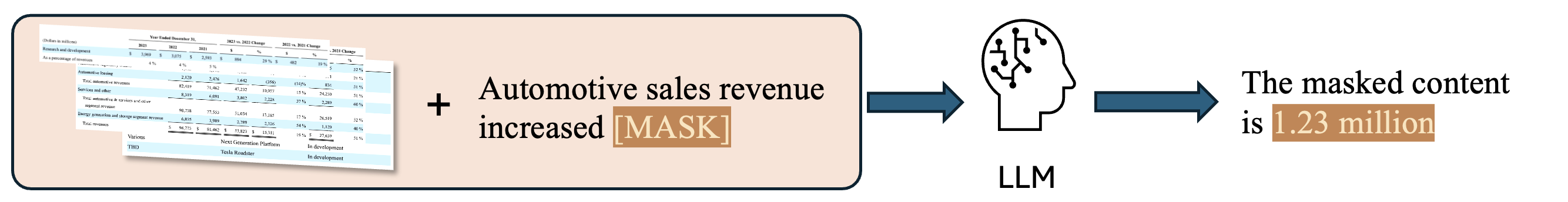

Financial applications demand trustworthy numerical reasoning. FAITH introduces a rigorous, scalable benchmark that probes intrinsic hallucinations in LLMs by framing evaluation as masked span prediction over real 10-K filings.

The framework combines structured table context with neighboring sentences, revealing how models mishandle values, units, and cross-table calculations.
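To make the masked span prediction setup concrete, here is a minimal sketch in Python. It is not the FAITH pipeline itself. The mask token, the regular expression for numeric spans, the prompt layout, and the exact-match scoring rule are all assumptions chosen for illustration, and the sample sentence and table are invented.

```python
import re

# Hypothetical placeholder token; the actual benchmark may use a different one.
MASK_TOKEN = "[MASK]"

# Assumed pattern for numeric spans such as "$1,250", "12.5%", or "2023".
NUMERIC_SPAN = re.compile(r"\$?\d+(?:,\d{3})*(?:\.\d+)?%?")


def mask_first_numeric_span(sentence):
    """Replace the first numeric span with MASK_TOKEN.

    Returns (masked_sentence, answer), or None if the sentence has no number.
    """
    match = NUMERIC_SPAN.search(sentence)
    if match is None:
        return None
    masked = sentence[:match.start()] + MASK_TOKEN + sentence[match.end():]
    return masked, match.group()


def build_prompt(table_rows, context_sentences, masked_sentence):
    """Join table context and neighboring sentences with the masked sentence."""
    table_text = "\n".join(" | ".join(row) for row in table_rows)
    context_text = " ".join(context_sentences)
    return (
        "Table:\n" + table_text + "\n\n"
        "Context: " + context_text + "\n\n"
        "Fill in " + MASK_TOKEN + ": " + masked_sentence
    )


def normalize(value):
    """Strip currency symbols, thousands separators, and whitespace."""
    return value.replace("$", "").replace(",", "").strip()


def is_exact_match(prediction, answer):
    """Assumed scoring rule: exact match after normalization."""
    return normalize(prediction) == normalize(answer)


# Invented example data, for illustration only.
table = [["Metric", "FY2023", "FY2022"], ["Net revenue", "1,250", "1,100"]]
sentence = "Net revenue increased to $1,250 million in fiscal 2023."
masked, answer = mask_first_numeric_span(sentence)
prompt = build_prompt(table, ["Figures are in millions of dollars."], masked)
```

A model's completion for `prompt` would then be compared with `answer` through `is_exact_match`. A prediction that contradicts the supplied table (for example `1,100` here) counts as an intrinsic hallucination under this simplified rule.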

Contributions

- Automated dataset creation via principled masking of financial narratives.

- New hallucination benchmark built from S&P 500 annual reports.

- Cross-model study of intrinsic hallucination patterns across reasoning scenarios.